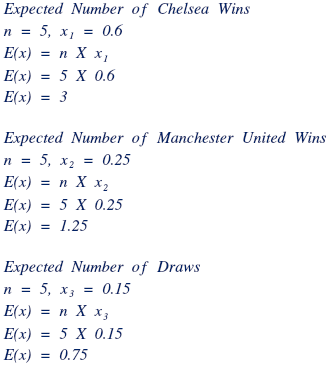

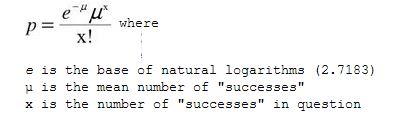

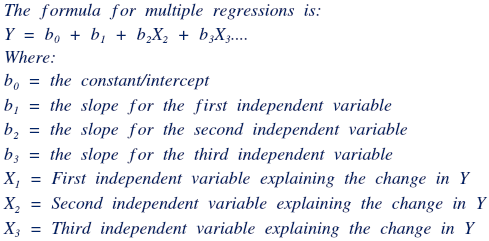

Throughout Chapter 8, we used the normal distribution to assess situations, hypotheses and probabilities. However, the χ² distribution, known as the chi-square distribution (pronounced "kai-square"), is just as useful. It is a continuous probability distribution that is widely used in statistical inference, and it is closely related to the standard normal distribution: if a random variable Y has the standard normal distribution, then Y² has a χ² distribution with exactly one degree of freedom. Similarly, if Z₁, Z₂, …, Zₖ are independent standard normal variables, then Z₁² + Z₂² + … + Zₖ² has a χ² distribution with exactly k degrees of freedom.

For the χ² distribution, the mean is equal to the degrees of freedom, while the variance is twice the degrees of freedom. When you look at a plot of the distribution, you will notice that the χ² curve with one degree of freedom is strongly positively skewed, but as the degrees of freedom increase, the skewness decreases and the curve starts to look like the normal distribution.

The chi-square test is a statistical test that is commonly used to decide whether the data give enough evidence to reject the null hypothesis in favour of the alternative hypothesis. It is often used to determine the “goodness of fit” between the observed data and the expected outcomes (empirical vs. theoretical). Should there be a large difference between the expected and observed data, a large chi-square value will result, indicating a significant difference between what the null hypothesis predicts and the actual data.

Before I lose you as a reader in this avalanche of words (I hope I still have your undivided attention at this point), let me show you an example pertaining to the goodness of fit for a binomial distribution.
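If you would like to see the mean and variance facts in action before moving on, here is a small simulation sketch. It is not part of the original lesson: the function name `simulate_chi_square`, the sample size and the seed are all my own choices for illustration. It simply adds up k squared standard normal draws many times and compares the sample mean and variance with k and 2k.

```python
import random
import statistics


def simulate_chi_square(k, n_samples=20000, seed=0):
    """Draw n_samples values of Z1^2 + ... + Zk^2, with independent standard normal Zi."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    return [
        sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(k))
        for _ in range(n_samples)
    ]


for k in (1, 2, 5, 10):
    draws = simulate_chi_square(k)
    mean = statistics.fmean(draws)
    variance = statistics.pvariance(draws)
    # Theory: mean = k, variance = 2k
    print(f"k={k:2d}  mean={mean:.2f} (theory {k})  variance={variance:.2f} (theory {2 * k})")
```

The simulated values will not match the theory exactly, since they are random, but they should land close to k and 2k for each choice of k.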

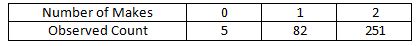

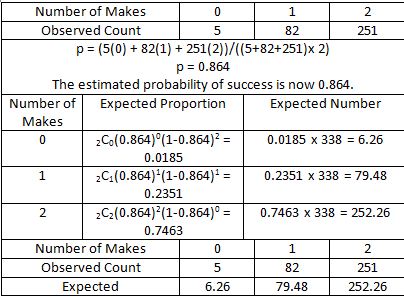

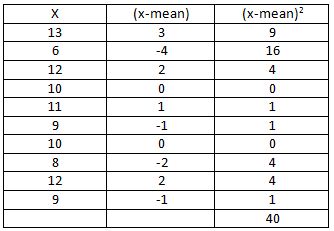

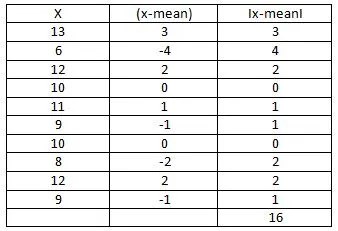

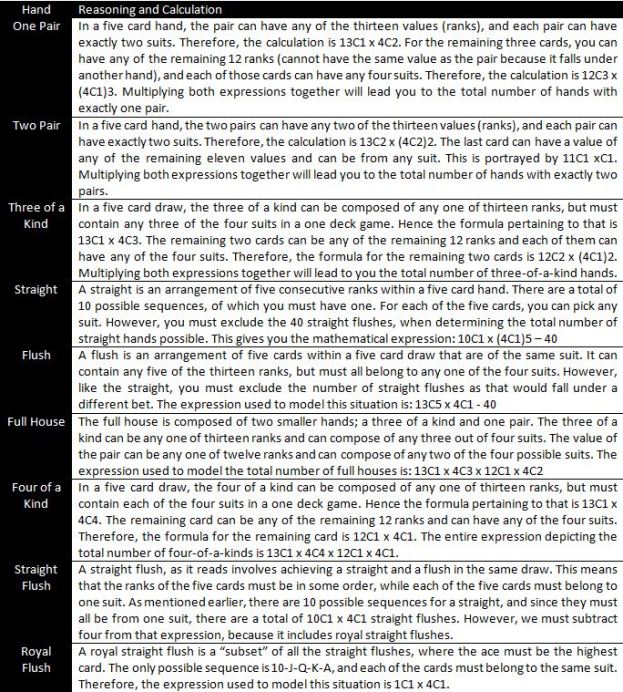

In several instances, we can use a χ² test to check the null hypothesis that the data come from a specific parametric distribution (e.g. binomial, Poisson, normal). As an example, suppose that a sports journalist claims that Michael Jordan’s free throw successes follow a binomial distribution with a success rate of 80%. Assume that the observed data over two seasons are as shown in the table below (note that the total number of free throw pairs is 338, and that each attempt consists of two free throws).

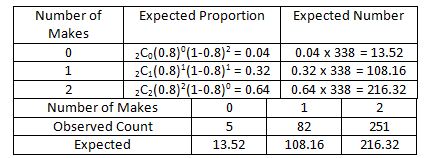

In words, Michael Jordan missed both free throws on five occasions during the two seasons, made one out of two on 82 occasions, and made both on 251 occasions. In this instance, the null hypothesis (H0) is that Michael Jordan’s number of successes on two free throws follows a binomial distribution with a success rate of 80%, while the alternative hypothesis claims that the distribution differs from the null hypothesis in some manner. There are two ways in which the null hypothesis could be false: a) the binomial distribution is reasonable, but the success probability is not 80%, or b) the binomial distribution is incorrect because the free throws are not independent, even though the probability of success is correct. Now, let us calculate the expected proportions and the expected counts if the null hypothesis were true.
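For anyone who wants to check the expected values by machine, here is a minimal sketch in Python. The variable names are my own; the only inputs are the numbers already given (338 pairs, an 80% success rate, and the observed counts 5, 82 and 251). Under the null hypothesis, the probability of k makes out of 2 is the binomial probability C(2, k) · 0.8ᵏ · 0.2²⁻ᵏ, and the expected count is that probability times 338.

```python
from math import comb

n_shots = 2        # free throws per pair
p_null = 0.80      # success rate claimed under the null hypothesis
n_pairs = 338      # total number of free throw pairs
observed = {0: 5, 1: 82, 2: 251}  # number of makes -> observed count


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


expected_prop = {k: binomial_pmf(k, n_shots, p_null) for k in observed}
expected_count = {k: n_pairs * prop for k, prop in expected_prop.items()}

for k in observed:
    print(
        f"{k} makes: observed={observed[k]:3d}  "
        f"expected proportion={expected_prop[k]:.2f}  "
        f"expected count={expected_count[k]:.2f}"
    )
```

The expected proportions work out to 0.04, 0.32 and 0.64, which give expected counts of 13.52, 108.16 and 216.32.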

From the table of expected values, we can see that the expected counts differ from the observed counts. Based on the hypothesis, Michael Jordan was expected to make both free throws on only 216.32 occasions; in reality, he did so on 251. Similarly, the expected counts for zero makes and one make differ from the observed counts. It is easy to spot the differences between the two sets of data with the naked eye. However, is the difference statistically significant? Can we reject the null hypothesis? Instead of using the normal distribution, we proceed to test the hypothesis using the chi-square method.

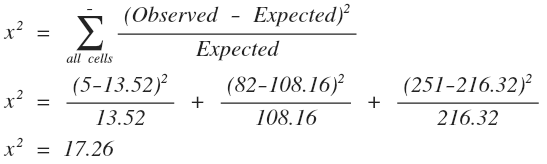

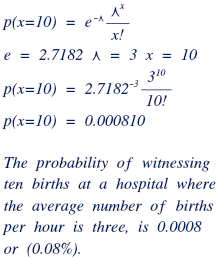

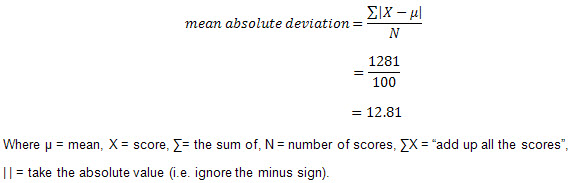

The chi-squared statistic is calculated as follows: for each cell, the expected value is subtracted from the observed value, and the difference is squared. That result is then divided by the expected value. Finally, these terms are added up over all cells (in this case, 0, 1 and 2 makes).
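The recipe above can be sketched in a few lines of Python. The function name `chi_square_statistic` is my own; the observed counts come from the data, and the expected counts are 338 multiplied by the binomial proportions 0.04, 0.32 and 0.64 under the 80% hypothesis.

```python
observed = [5, 82, 251]             # 0, 1 and 2 makes
expected = [13.52, 108.16, 216.32]  # 338 * (0.04, 0.32, 0.64) under the null hypothesis


def chi_square_statistic(observed, expected):
    """Sum of (observed - expected)^2 / expected over all cells."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


stat = chi_square_statistic(observed, expected)
print(f"chi-squared test statistic = {stat:.2f}")
```

Each of the three cells contributes somewhere between 5 and 7 to the total, so no single cell is responsible for the large value.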

From this calculation, we can see that the chi-squared test statistic is equal to 17.26. Next, the “p” value must be obtained. To begin with, the degrees of freedom are required, and they are obtained by subtracting one from the total number of cells (in this case, 3). That makes the degrees of freedom equal to two. There are two ways in which we can find the “p” value: we can either draw the chi-square distribution with two degrees of freedom and look up the area based on our chi-squared test statistic, or we can use an online calculator. Keep in mind that the larger the value of the test statistic, the greater the evidence against the null hypothesis. The “p” value is the probability of attaining values greater than the chi-squared test statistic (the area to the right of the value on the graph). The smaller the “p” value, the stronger the evidence against the null hypothesis. In this instance, the “p” value generated by an online calculator is 0.00017866; it indicates a strong rejection of the null hypothesis. Although we have obtained strong evidence that the hypothesis is not true, our work remains unfinished. As stated above, there are two ways in which the hypothesis can be incorrect, and it is necessary to test which of them is the underlying reason why the null hypothesis was rejected.
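If you do not have an online calculator handy, the “p” value for this particular case can be reproduced with a one-line formula. This is a sketch that relies on a special property: with exactly two degrees of freedom, the area to the right of x under the chi-square curve is exp(−x/2). The function name `chi2_sf_2df` is my own, and the shortcut does not apply to other degrees of freedom.

```python
import math


def chi2_sf_2df(x):
    """Right-tail area P(X > x) for a chi-square distribution with 2 degrees of freedom."""
    return math.exp(-x / 2.0)


n_cells = 3
df = n_cells - 1          # degrees of freedom: cells minus one
test_statistic = 17.26    # rounded chi-squared test statistic from the text

p_value = chi2_sf_2df(test_statistic)
print(f"degrees of freedom = {df}")
print(f"p-value = {p_value:.8f}")
```

Using the rounded statistic 17.26 gives the same value as the online calculator; feeding in the unrounded statistic would change only the later decimal places.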

In practical situations, the parameters are often unknown, and the available data must be used to estimate them. So for this case, let us assume that the success rate is not provided, and that we have to estimate it. The null hypothesis can now be reworded to state, “Michael Jordan’s number of successes on two free throws follows a binomial distribution” (notice that the statement does not specify a success rate). Let us then estimate the probability that he made a free throw.
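One natural way to estimate the success rate, sketched below, is to divide the total number of made free throws by the total number of attempts. I am assuming this pooled proportion is the estimate intended here; the variable names are my own.

```python
observed = {0: 5, 1: 82, 2: 251}  # number of makes -> observed count

# Every pair contributes k makes out of 2 attempts.
total_makes = sum(k * count for k, count in observed.items())
total_attempts = 2 * sum(observed.values())

p_hat = total_makes / total_attempts
print(f"{total_makes} makes out of {total_attempts} attempts: estimated p = {p_hat:.3f}")
```

That is 584 makes out of 676 attempts, or roughly 0.864, noticeably higher than the journalist's 80%.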

After calculating the expected counts based on the estimated probability that he made a free throw, we can immediately notice how close the expected and observed counts of Michael Jordan’s free throw shooting over two seasons are. As in the previous situation, we must calculate the chi-squared test statistic based on the new set of values. In this instance, the value of the test statistic is 0.34. Once again, the “p” value is required to determine whether the null hypothesis can be rejected or not. Therefore, we need to determine the degrees of freedom for the data set. While it may be quite tempting to say that the degrees of freedom are two, there is a slight catch. Because we estimated the success probability from the data (finding p = 0.864), we have lost an extra degree of freedom. Therefore, there is one degree of freedom in this instance. After using the online calculator, or plotting the chi-square distribution with one degree of freedom, you will find that the “p” value (the area under the curve to the right of 0.34) is approximately 0.56. Since this is a large p-value, we fail to reject the null hypothesis. In conclusion, there is no evidence that Jordan’s true distribution of successful free throws differs from a binomial distribution.
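Here is a sketch that puts the whole second test together. The names are my own, and I am assuming the success rate is estimated as total makes divided by total attempts. For one degree of freedom, the right-tail area can be written with the complementary error function, because a chi-square variable with one degree of freedom is a squared standard normal: P(X > x) = P(|Z| > √x) = erfc(√(x/2)).

```python
import math
from math import comb

observed = {0: 5, 1: 82, 2: 251}  # number of makes -> observed count
n_pairs = sum(observed.values())

# Estimate the success rate from the data (assumed: total makes / total attempts).
p_hat = sum(k * count for k, count in observed.items()) / (2 * n_pairs)

# Expected counts under a binomial distribution with the estimated rate.
expected = {
    k: n_pairs * comb(2, k) * p_hat**k * (1 - p_hat) ** (2 - k)
    for k in observed
}

# Chi-squared test statistic: sum of (observed - expected)^2 / expected.
stat = sum((observed[k] - expected[k]) ** 2 / expected[k] for k in observed)

# Cells minus one, minus one more for the estimated parameter.
df = len(observed) - 1 - 1


def chi2_sf_1df(x):
    """Right-tail area P(X > x) for a chi-square distribution with 1 degree of freedom."""
    return math.erfc(math.sqrt(x / 2.0))


p_value = chi2_sf_1df(stat)

for k in observed:
    print(f"{k} makes: observed={observed[k]:3d}  expected={expected[k]:.2f}")
print(f"test statistic = {stat:.2f}, degrees of freedom = {df}, p-value = {p_value:.2f}")
```

The `erfc` shortcut is specific to one degree of freedom; for other cases, a table or a calculator is still the easier route.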

In this way, the chi-squared method of deciding whether or not to reject a hypothesis is quite useful, given a set of data and a null hypothesis. The chi-squared method is similar in nature to the other methods of testing hypotheses, and offers an alternative way to assess the same kind of claim.

I hope you, as a reader, enjoyed reading all eight posts about Data Management in Mathematics. For now, this is the last post in the series; however, stay tuned for more in the future. Happy Reading 🙂

Before signing off, a special shout out to the following people: the creators of Daum Equation Editor (the program is a lifesaver), my entire Data Management class, Ms. Mark (my Data Management teacher), WordPress and all you readers, followers, commentators and math enthusiasts out there.

Peace out xx

References:

http://www.colby.edu/biology/BI17x/freq.html

http://www.danielsoper.com/statcalc3/calc.aspx?id=11

http://statistics.unl.edu/faculty/bilder/categorical/Chapter1/Section1.2.pdf

http://www2.lv.psu.edu/jxm57/irp/chisquar.html

http://www.youtube.com/watch?v=O7wy6iBFdE8